Pigmento: Pigment-Based Image Analysis and Editing

Paper: PDF, 300dpi images (15 MB) | PDF, full size images (45 MB)

Code: GitHub

Presentation: Keynote (200 MB) | PDF (140 MB) | PDF with notes (140 MB) | Video: YouTube or MP4 (50 MB)

Supplementary material (additional results and multi-spectral decomposition data):

Zip (300 MB)

Abstract:

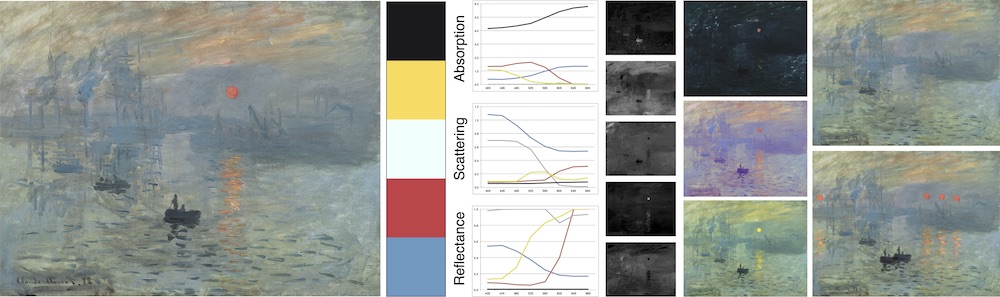

The colorful appearance of a physical painting is determined by the distribution of paint pigments across the canvas, which we model as a per-pixel mixture of a small number of pigments with multispectral absorption and scattering coefficients. We present an algorithm to efficiently recover this structure from an RGB image, yielding a plausible set of pigments and a low RGB reconstruction error. We show that under certain circumstances we are able to recover pigments that are close to ground truth, while in all cases our results are plausible. Using our decomposition, we recast standard digital image editing operations as operations in pigment space rather than RGB, with novel results. We demonstrate tonal adjustments, selection masking, cut-copy-paste, recoloring, palette summarization, and edge enhancement.
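To make the per-pixel mixture model concrete, here is a minimal sketch of how a pigment mixture could map to spectral reflectance under the Kubelka-Munk model named in the keywords. It assumes the textbook form for an opaque (infinitely thick) layer, R = 1 + K/S - sqrt((K/S)^2 + 2K/S), with the mixture's absorption K and scattering S taken as weighted sums of the per-pigment coefficients. The function names, the three-band spectra, and the weights are hypothetical illustrations, not values or code from the paper, and the paper's exact formulation may differ. Converting reflectance to RGB (illuminant and color matching functions) is omitted.

```python
import math


def mix_coefficients(weights, coeffs):
    """Weighted sum of per-pigment coefficient spectra.

    weights: one mixing weight per pigment.
    coeffs:  one list of per-band coefficients per pigment.
    """
    n_bands = len(coeffs[0])
    return [
        sum(w * c[band] for w, c in zip(weights, coeffs))
        for band in range(n_bands)
    ]


def km_reflectance(K, S):
    """Kubelka-Munk reflectance of an opaque layer, one value per band.

    Assumes the infinitely-thick-layer form:
    R = 1 + K/S - sqrt((K/S)^2 + 2 K/S)
    """
    reflectance = []
    for k, s in zip(K, S):
        ratio = k / s
        reflectance.append(1.0 + ratio - math.sqrt(ratio * ratio + 2.0 * ratio))
    return reflectance


# Hypothetical pigments sampled at three wavelength bands (made-up numbers).
absorption = [[0.1, 0.5, 2.0], [1.5, 0.3, 0.05]]
scattering = [[1.0, 1.0, 1.0], [0.8, 1.0, 1.2]]

# Hypothetical mixing weights for a single pixel.
weights = [0.7, 0.3]

K = mix_coefficients(weights, absorption)
S = mix_coefficients(weights, scattering)
reflectance = km_reflectance(K, S)
print(reflectance)
```

In this sketch, a band with no absorption reflects all light (R = 1), and reflectance falls as K/S grows, which is why editing the mixing weights changes color differently than editing RGB values directly.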

BibTeX (or see the IEEE page):

@article{Tan:2018:PPB,

author = {Tan, Jianchao and {DiVerdi}, Stephen and Lu, Jingwan and Gingold, Yotam},

title = {Pigmento: Pigment-Based Image Analysis and Editing},

journal = {Transactions on Visualization and Computer Graphics (TVCG)},

volume = {25},

number = {9},

year = {2019},

publisher = {IEEE},

doi = {10.1109/TVCG.2018.2858238},

keywords = {painting, color, RGB, non-photorealistic editing, NPR, kubelka-munk, pigment, paint, mixing, layering, image, editing}

}

Funding: This work was supported in part by the United States National Science Foundation (IIS-1451198 & IIS-1453018), a Google research award, and a gift from Adobe Systems Inc.